The American financial markets are in the throes of a revolution, not led by charismatic CEOs or bold hedge fund managers, but by algorithms. The integration of Artificial Intelligence (AI) and Machine Learning (ML) into trading, a domain often referred to as “AI Trading” or “Quantitative 2.0,” is fundamentally reshaping how capital is allocated, risk is managed, and profits are generated. This technological surge promises unprecedented efficiency, liquidity, and analytical depth. However, it also presents a labyrinth of novel risks, from inscrutable “black box” decision-making to the potential for AI-driven market crises that could unfold in milliseconds.

Standing at this new frontier is the U.S. Securities and Exchange Commission (SEC). Under the leadership of Chair Gary Gensler, a former MIT professor with a deep understanding of financial technology, the agency is grappling with a monumental task: how to regulate the unbridled innovation of AI without stifling it. The SEC’s approach is not merely reactive; it is proactively attempting to sculpt a regulatory framework that will define the future of American finance for decades to come. This article delves into the core of this complex interplay, exploring the promises and perils of AI trading and the SEC’s multi-pronged strategy to tame the frontier.

Part 1: The New Landscape – Understanding AI in Trading

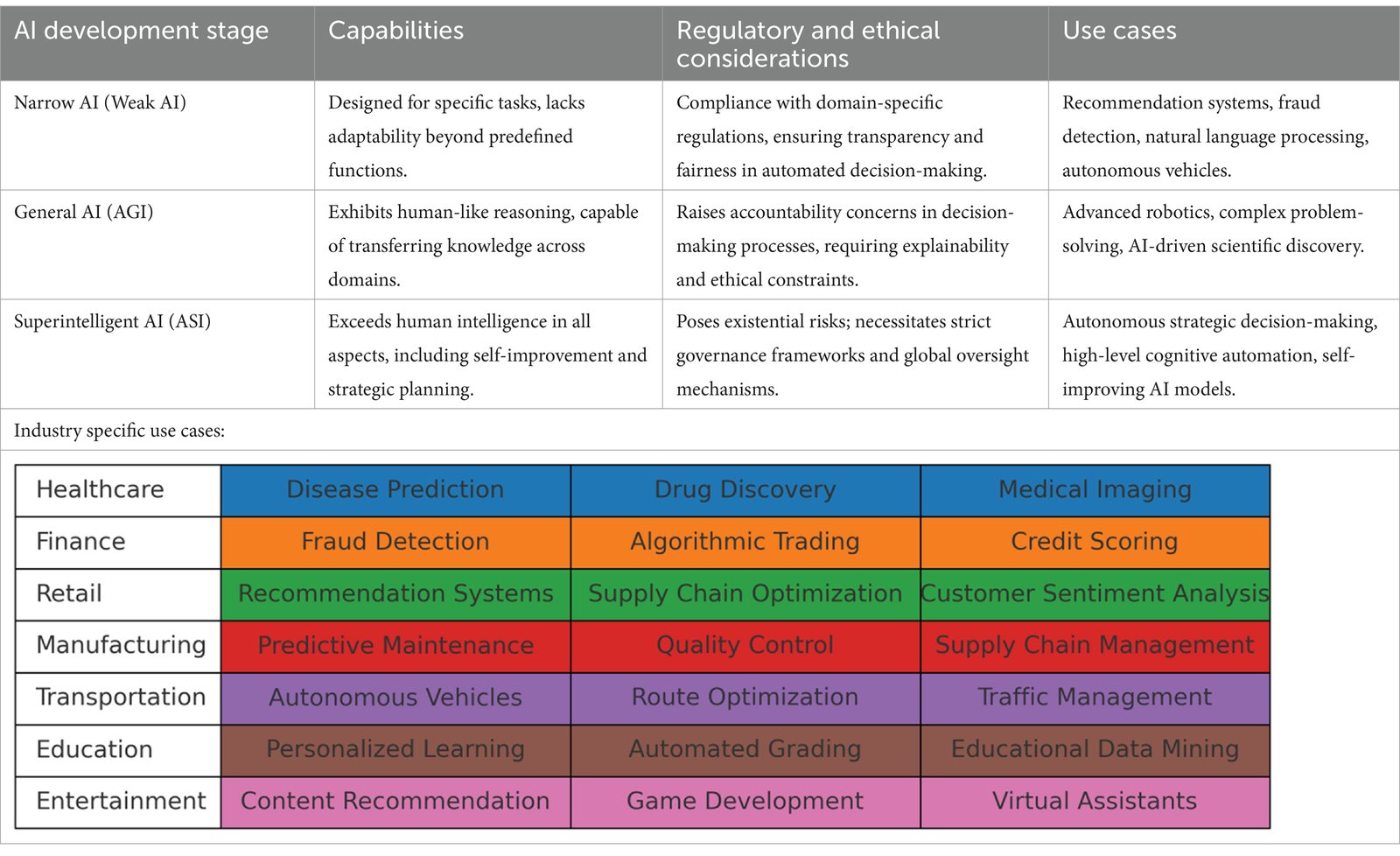

Before examining the regulatory response, it’s crucial to understand what we mean by “AI Trading.” It’s a broad term encompassing several sophisticated technologies:

- Machine Learning (ML) and Predictive Analytics: Algorithms that analyze vast historical and real-time datasets—news sentiment, social media, satellite imagery, economic reports, and market data—to identify patterns and predict price movements.

- Natural Language Processing (NLP): AI that can read, understand, and contextualize human language. This is used to parse earnings reports, regulatory filings, and news headlines to generate actionable trading signals.

- Reinforcement Learning: A type of ML where algorithms learn optimal strategies through trial and error, constantly refining their trading approaches based on rewards (profits) and penalties (losses).

- Deep Learning and Neural Networks: Complex systems modeled on the human brain, capable of identifying subtle, non-linear relationships in data that are invisible to traditional models.
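
To make the reinforcement learning idea above concrete, here is a minimal sketch of trial-and-error learning using an epsilon-greedy rule. It is a toy illustration, not how any particular firm trades: the two “strategies,” their average rewards, and the function name `epsilon_greedy` are all assumptions made up for this example.

```python
import random


def epsilon_greedy(mean_rewards, steps=500, epsilon=0.1, seed=0):
    """Learn a value estimate for each action by trial and error.

    mean_rewards: hypothetical average profit (+) or loss (-) per action.
    Returns (estimates, counts): the learned value of each action and
    how many times each action was tried.
    """
    rng = random.Random(seed)
    n = len(mean_rewards)
    estimates = [0.0] * n
    counts = [0] * n
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: try a random action to keep learning about it.
            action = rng.randrange(n)
        else:
            # Exploit: pick the action that currently looks best.
            action = max(range(n), key=lambda a: estimates[a])
        # Noisy reward around the action's (hypothetical) average payoff.
        reward = mean_rewards[action] + rng.uniform(-0.5, 0.5)
        counts[action] += 1
        # Incremental average: nudge the estimate toward the new reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


# Action 0 is profitable on average, action 1 loses money on average.
estimates, counts = epsilon_greedy([1.0, -1.0])
print(estimates, counts)
```

Over time the learner concentrates on the profitable action while still occasionally testing the other one, which is the “rewards and penalties” loop in miniature.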

The entities deploying these technologies range from established Wall Street banks and high-frequency trading (HFT) firms to a new wave of tech-driven hedge funds and even retail trading platforms incorporating AI-powered tools.

The Promised Land: Benefits of AI Trading

The proponents of AI trading point to significant benefits that enhance market quality:

- Enhanced Liquidity and Efficiency: AI-driven market makers provide constant buy and sell quotes, narrowing bid-ask spreads and making it easier and cheaper for all participants to trade.

- Superior Risk Management: AI can analyze complex portfolios in real-time, simulating millions of market scenarios to identify hidden risks and correlations far more effectively than human teams.

- Democratization of Sophisticated Tools: Retail-focused platforms now offer AI-driven analytics, portfolio optimization, and automated trading strategies that were once the exclusive domain of institutional players.

- Unbiased Decision-Making (In Theory): In an ideal world, AI could remove human emotional biases like fear and greed from trading decisions, leading to more rational markets.
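
The scenario simulation mentioned under risk management can be sketched as a small Monte Carlo estimate of Value at Risk (VaR). This is a simplified illustration under stated assumptions: the portfolio weights, volatilities, the single shared market factor, and the `correlation` parameter are all hypothetical, and production risk systems are far more elaborate.

```python
import math
import random


def simulate_var(weights, vols, confidence=0.99, n_scenarios=10_000,
                 correlation=0.5, seed=0):
    """Estimate one-day Value at Risk by simulating market scenarios.

    weights: portfolio weight of each asset (hypothetical).
    vols: daily return volatility of each asset (hypothetical).
    correlation: share of each asset's variance driven by a common
        market shock (an assumption of this toy model).
    Returns the loss, as a fraction of portfolio value, that is exceeded
    in roughly (1 - confidence) of the simulated scenarios.
    """
    rng = random.Random(seed)
    losses = []
    for _ in range(n_scenarios):
        market = rng.gauss(0.0, 1.0)  # shock shared by all assets
        pnl = 0.0
        for weight, vol in zip(weights, vols):
            own = rng.gauss(0.0, 1.0)  # asset-specific shock
            shock = (math.sqrt(correlation) * market
                     + math.sqrt(1.0 - correlation) * own)
            pnl += weight * vol * shock
        losses.append(-pnl)
    losses.sort()
    index = min(int(confidence * n_scenarios), n_scenarios - 1)
    return losses[index]


var_99 = simulate_var([0.6, 0.4], [0.02, 0.03], confidence=0.99)
var_95 = simulate_var([0.6, 0.4], [0.02, 0.03], confidence=0.95)
print(f"99% VaR: {var_99:.4f}, 95% VaR: {var_95:.4f}")
```

Raising `correlation` in this sketch increases the estimated loss, which is one simple way to see how hidden correlations between holdings translate into risk.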

Part 2: The Perils of the Algorithmic Frontier

For all its promise, the rapid adoption of AI introduces profound risks that keep regulators awake at night. Chair Gensler has repeatedly stated that AI is the “most transformative technology of our time,” on par with the internet, and that its associated financial stability risks are a central concern.

1. The “Black Box” Problem and Opacity

Many complex AI models, particularly deep learning networks, are inherently opaque. It can be difficult, if not impossible, for even their creators to fully explain why a specific decision was made. This inscrutability conflicts directly with fundamental regulatory principles of transparency, accountability, and auditability. How can the SEC mandate that a firm supervise its trading activities if the firm itself cannot fully articulate the logic behind its trades?

2. Digital Herding and Systemic Risk

The financial system is an ecosystem. If multiple major market participants employ similar AI models trained on similar data, they can begin to act in concert. This “digital herding” could lead to correlated, self-reinforcing market moves—both up and down. A sudden, AI-driven mass sell-off could create a “flash crash” on steroids, with liquidity evaporating in an instant and human traders unable to react in time. This homogeneity in models presents a significant systemic risk.
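
The herding mechanism described above can be illustrated with a toy simulation. In this sketch, each hypothetical trading agent decides to buy or sell based on a blend of a signal shared by everyone (standing in for similar models and data) and a private signal of its own. The `shared_weight` parameter and the “order imbalance” measure are assumptions chosen for illustration, not a regulatory metric.

```python
import random


def average_imbalance(shared_weight, n_agents=200, n_steps=200, seed=0):
    """Average one-sidedness of order flow across simulated periods.

    shared_weight: 0.0 means every agent relies only on its own signal;
        1.0 means all agents react to the same signal (hypothetical knob).
    Returns a value between 0 (buys and sells balance out) and
    1 (every agent trades in the same direction).
    """
    rng = random.Random(seed)
    total = 0.0
    for _step in range(n_steps):
        shared = rng.gauss(0.0, 1.0)  # signal common to all agents
        net_orders = 0
        for _agent in range(n_agents):
            private = rng.gauss(0.0, 1.0)  # agent-specific signal
            signal = shared_weight * shared + (1.0 - shared_weight) * private
            net_orders += 1 if signal > 0 else -1  # +1 buy, -1 sell
        total += abs(net_orders) / n_agents
    return total / n_steps


print("diverse models:", average_imbalance(0.1))
print("similar models:", average_imbalance(0.9))
```

When agents lean mostly on the shared signal, nearly all of them end up on the same side of the market in each period, which is the one-sided flow that can drain liquidity in a sell-off.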

3. Data Integrity and “AI Hallucinations”

AI models are only as good as the data they are trained on. Biased, incomplete, or maliciously manipulated data will lead to flawed and potentially dangerous trading outcomes. Furthermore, the phenomenon of “hallucinations,” where generative AI models produce plausible but entirely fabricated information, could lead to trading decisions based on pure fiction.

4. Predictive Manipulation and “AI Wash Trading”

Sophisticated bad actors could use AI to manipulate markets in new ways. An algorithm could learn to execute a series of small, seemingly unrelated orders that, in aggregate, create a false technical signal, tricking other AI models into buying or selling. This “algorithmic manipulation” is far more subtle and difficult to detect than traditional pump-and-dump schemes. Similarly, AI could be used to conduct complex, high-volume “wash trading” to create artificial volume and interest in an asset.
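
As a rough illustration of how wash trading shows up in data, the sketch below flags trades in which the buying and selling accounts trace back to the same beneficial owner. Real surveillance is much more sophisticated; the `Trade` fields, the `owner_of` mapping, and the sample accounts are hypothetical and exist only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    buyer_account: str
    seller_account: str
    symbol: str
    quantity: int


def flag_wash_trades(trades, owner_of):
    """Return trades whose buyer and seller share a beneficial owner.

    owner_of: mapping from account ID to beneficial owner (hypothetical
    reference data; accounts missing from the mapping are not flagged).
    """
    flagged = []
    for trade in trades:
        buyer_owner = owner_of.get(trade.buyer_account)
        seller_owner = owner_of.get(trade.seller_account)
        if buyer_owner is not None and buyer_owner == seller_owner:
            flagged.append(trade)
    return flagged


def wash_volume_share(trades, owner_of):
    """Fraction of total traded quantity that comes from flagged trades."""
    total = sum(trade.quantity for trade in trades)
    if total == 0:
        return 0.0
    washed = sum(t.quantity for t in flag_wash_trades(trades, owner_of))
    return washed / total


# Made-up sample data: two accounts controlled by the same owner.
owners = {"A1": "alice", "A2": "alice", "B1": "bob"}
sample = [
    Trade("A1", "A2", "XYZ", 100),  # same owner on both sides
    Trade("A1", "B1", "XYZ", 300),  # genuine change of ownership
]
print(wash_volume_share(sample, owners))
```

A high share of self-matched volume is only a starting signal. The harder problem the article describes is manipulation spread across many small orders and accounts, which a simple rule like this would miss.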

5. Conflicts of Interest at Scale

This is a primary focus of the SEC’s current rulemaking. On platforms like robo-advisors or brokerages that use AI-powered optimization tools, an inherent conflict arises. The AI may be tuned to optimize for a goal that benefits the platform—such as increasing trading frequency, directing orders to a specific venue for payment (payment for order flow), or favoring proprietary products—rather than achieving the best outcome for the client. An AI can embed this bias at a scale and subtlety that is impossible for a retail investor to detect.
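
A small sketch shows how such a conflict can hide inside an optimization objective. Here a hypothetical order router scores each venue by the client’s price improvement plus a weighted term for the rebate the firm receives. The venue names, the numbers, and the `firm_weight` parameter are invented for illustration and do not describe any real platform.

```python
def choose_venue(venues, firm_weight):
    """Pick the venue with the highest blended score.

    venues: mapping of venue name to a pair
        (client_price_improvement, firm_rebate), both in cents per share
        (hypothetical figures).
    firm_weight: how strongly the objective rewards firm revenue;
        0.0 means the router optimizes for the client alone.
    """
    def score(item):
        _name, (client_gain, firm_rebate) = item
        return client_gain + firm_weight * firm_rebate

    return max(venues.items(), key=score)[0]


def has_conflict(venues, firm_weight):
    """True if the firm-weighted choice differs from the client-only choice."""
    return choose_venue(venues, firm_weight) != choose_venue(venues, 0.0)


venues = {
    "venue_a": (0.30, 0.00),  # best for the client, pays the firm nothing
    "venue_b": (0.10, 0.25),  # worse for the client, pays the firm a rebate
}
print(choose_venue(venues, firm_weight=0.0))  # venue_a
print(choose_venue(venues, firm_weight=1.0))  # venue_b
print(has_conflict(venues, firm_weight=1.0))  # True
```

Nothing in the router’s output reveals which weight was used, which is why a retail investor would struggle to detect the bias from the outside.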

Part 3: The SEC’s Regulatory Playbook – A Multi-Pronged Assault

The SEC’s approach to governing this new frontier is not centered on a single, landmark “AI Act.” Instead, it is a sophisticated, multi-pronged strategy that leverages existing regulatory frameworks while proposing new, targeted rules to address novel threats.

Prong 1: Enforcement Actions – Policing the Existing Laws

The SEC is sending a clear message through enforcement: existing securities laws absolutely apply to AI. The agency is not waiting for new legislation; it is aggressively using its current authority to police misconduct involving AI.

- The Case of “AI Washing”: In March 2024, the SEC charged two investment advisory firms, Delphia (USA) Inc. and Global Predictions Inc., with making false and misleading statements about their use of AI. The SEC alleged that the firms promoted their use of AI and ML models in marketing materials when, in reality, their investment decisions were not driven by these technologies as claimed. This action underscores the SEC’s focus on ensuring that disclosures about AI are truthful and not merely marketing hype.

- AI-Driven Fraud: The SEC has also brought cases where AI was used as a tool for outright fraud. In one instance, the SEC charged a fund manager with using an AI “to manipulate stock prices,” highlighting that using technology to engage in classic market manipulation schemes does not immunize bad actors from prosecution.

These enforcement actions serve as critical precedent, establishing that “because the AI did it” is not a valid legal defense.

Prong 2: Rulemaking – Proactive Regulatory Shields

Beyond enforcement, the SEC is proactively crafting new rules designed to mitigate the specific risks posed by AI.

- The Proposed Conflict of Interest Rule: This is arguably the most significant and direct regulatory move against an AI-specific risk. In July 2023, the SEC proposed a new rule that would prohibit broker-dealers and investment advisers from using “covered technologies” in investor interactions that place the firm’s interests ahead of the investor’s interests. The term “covered technologies” is defined broadly to include AI, ML, and NLP.

- The Core Mandate: Firms would be required to identify and eliminate—or neutralize the effect of—any such conflicts of interest. This places a heavy burden of compliance on firms to rigorously evaluate their AI systems for hidden biases that favor the firm.

- Impact: This rule would fundamentally change how AI is deployed on client-facing platforms. It moves beyond simple disclosure (“our AI may have conflicts”) to a proactive duty to eradicate those conflicts.

- Market Structure Reforms: While not exclusively about AI, the SEC’s sweeping set of proposed reforms to U.S. equity markets directly affects the HFT and algorithmic trading world. Rules like the “Order Competition Rule” and enhanced transparency requirements for stock exchanges and market makers are designed to create a fairer playing field, partly in response to the perceived advantages and potential anti-competitive effects of ultra-fast, AI-driven trading.

Prong 3: Oversight and Examination – Scrutinizing the “Black Box”

The SEC’s Division of Examinations has made the use of AI and complex algorithms a key examination priority. Examiners are now trained to ask probing questions of financial firms:

- Governance: Who is responsible for the AI model? What is the model governance framework?

- Data & Model Validation: What data was used to train the model? How are the model’s performance and fairness continuously tested and validated?

- Explainability: Can the firm explain, in understandable terms, how its AI makes decisions?

- Controls: What “circuit breakers” and kill switches are in place to halt the AI if it behaves erratically?
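
The kind of control examiners ask about can be sketched as a simple kill switch that halts trading when a loss limit or an order-rate limit is breached. This is a minimal illustration under assumed thresholds. The class name `KillSwitch`, its parameters, and the two limits are hypothetical and do not reflect any SEC-prescribed design.

```python
class KillSwitch:
    """Halts an automated strategy when basic risk limits are breached."""

    def __init__(self, max_loss, max_orders_per_window):
        self.max_loss = max_loss  # hypothetical cumulative loss limit
        self.max_orders_per_window = max_orders_per_window  # hypothetical cap
        self.cumulative_pnl = 0.0
        self.orders_in_window = 0
        self.halted = False
        self.reason = None

    def _halt(self, reason):
        # Keep the first reason so the cause of the halt stays auditable.
        if not self.halted:
            self.halted = True
            self.reason = reason

    def record_pnl(self, pnl_change):
        """Update running profit and loss; halt if losses exceed the limit."""
        self.cumulative_pnl += pnl_change
        if self.cumulative_pnl <= -self.max_loss:
            self._halt("loss limit breached")

    def allow_order(self):
        """Return True if a new order may be sent, False once halted."""
        if self.halted:
            return False
        self.orders_in_window += 1
        if self.orders_in_window > self.max_orders_per_window:
            self._halt("order rate limit breached")
            return False
        return True

    def reset_window(self):
        """Call at the start of each time window (for example, each second)."""
        self.orders_in_window = 0


switch = KillSwitch(max_loss=1000.0, max_orders_per_window=3)
switch.record_pnl(-400.0)
print(switch.allow_order())   # True: still within both limits
switch.record_pnl(-700.0)     # cumulative loss is now 1100
print(switch.allow_order())   # False: loss limit breached
print(switch.reason)
```

Once halted, the switch in this sketch stays halted until a person intervenes, which reflects the governance question of who is responsible for restarting the system.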

This supervisory pressure forces firms to build robust internal controls around their AI systems, effectively making them partners in the regulatory process.

Part 4: The Gensler Doctrine – A Philosophical Framework

Gary Gensler’s personal expertise in fintech has shaped a coherent philosophy towards AI regulation, which we can term the “Gensler Doctrine.” Its key tenets are:

- Technology is Neutral, but Outcomes Are Not: The SEC does not seek to regulate AI as a technology. Its focus is on the financial outcomes and systemic risks that AI can produce. The tool itself is not the target; its misuse is.

- The Primacy of Existing Law: The securities laws of the 1930s, built on principles of anti-fraud, anti-manipulation, and fiduciary duty, are remarkably resilient and technology-agnostic. They provide a solid foundation for tackling new technologies.

- The Peril of “Network Effects”: Gensler frequently warns about the danger of a “monoculture” where a few foundational AI models are used by the vast majority of market participants. This concentration creates a single point of failure that could threaten the entire financial system.

- The Need for “Regulatory Vigilance”: The SEC cannot afford to be a passive observer. It must invest in its own technological capabilities—hiring data scientists and AI experts—to keep pace with the industry it regulates.

Part 5: The Road Ahead – Challenges and Unanswered Questions

The SEC’s path is fraught with challenges. The balance between fostering innovation and ensuring stability is delicate.

- The Innovation vs. Regulation Dilemma: The financial industry argues that overly prescriptive rules, like the proposed conflict of interest rule, could stifle innovation, drive business offshore to less regulated jurisdictions, and ultimately harm American competitiveness.

- The Technical Feasibility of Compliance: Can the “black box” problem ever be fully solved? Requiring complete explainability for every AI decision might be technologically impossible, forcing the SEC to accept certain levels of opacity if robust governance and controls are in place.

- Jurisdictional Complexity: AI regulation touches multiple agencies beyond the SEC, including the Commodity Futures Trading Commission (CFTC), the Consumer Financial Protection Bureau (CFPB), and even White House initiatives on AI safety. Coordinating a coherent national policy is a monumental task.

- The Global Dimension: Financial markets are global. A regulatory approach that is too stringent in the U.S. could simply push AI trading activity to financial centers in Asia or Europe, creating regulatory arbitrage without reducing global systemic risk.

Conclusion: Navigating the Frontier

The integration of AI into American trading is an unstoppable force. It holds the potential to create more efficient, liquid, and intelligent markets. However, this technological frontier is wild and untamed, populated with new and poorly understood risks.

The SEC, under Gary Gensler’s technocratic leadership, is acting as the sheriff, surveyor, and town planner of this new territory. Its strategy, a blend of aggressive enforcement of old laws, proactive writing of new rules, and intense supervisory scrutiny, represents one of the most comprehensive attempts by any regulator to govern AI in finance.

The ultimate success of this endeavor will not be measured by the absence of AI-driven incidents, but by the resilience of the system when they inevitably occur. By forcing transparency, demanding accountability, and relentlessly focusing on investor protection and systemic risk, the SEC is not trying to stop the AI revolution. It is trying to ensure that as we charge into this new era, the markets remain fair, orderly, and trustworthy for all who participate. The future of AI trading in America is being written not just in Silicon Valley coding rooms, but equally in the hearing rooms and regulatory offices of the SEC.

Frequently Asked Questions (FAQ)

Q1: Is AI trading illegal?

A: No, the use of AI in trading is not illegal. It is a rapidly growing and legitimate field. However, using AI to engage in illegal activities—such as market manipulation, fraud, or violating fiduciary duties—is absolutely illegal. The SEC’s enforcement actions demonstrate that it will apply existing securities laws to misconduct involving AI.

Q2: As a retail investor, how am I protected from risky or manipulative AI trading?

A: You are protected through several layers of SEC regulation:

- Enforcement: The SEC actively pursues firms that use AI to defraud or mislead investors.

- Disclosure Rules: Public companies and funds must disclose material risks, which could include their reliance on or exposure to AI models.

- Proposed Rules: The SEC’s proposed conflict of interest rule is specifically designed to prevent platforms from using AI that puts their interests ahead of yours.

- Market Oversight: The SEC monitors the markets for manipulative trading patterns, including those potentially generated by AI.

Q3: What is the “black box” problem, and why is it such a big deal for regulators?

A: The “black box” problem refers to the inability to easily understand or explain the internal decision-making process of a complex AI model. For regulators, this is a major concern because it conflicts with core principles of market regulation:

- Accountability: If a firm cannot explain why its AI made a trade that manipulated the market or harmed a client, it is difficult to hold the firm accountable.

- Auditability: Regulators need to be able to audit systems to ensure compliance. An inscrutable system is nearly impossible to audit effectively.

- Supervision: Firms have a legal duty to supervise their trading activities. The “black box” makes effective supervision profoundly challenging.

Q4: Has the SEC banned any specific uses of AI in trading?

A: As of now, the SEC has not issued an outright ban on any specific AI technique. Instead, its approach is “technology-neutral,” focusing on the outcomes and uses of the technology. The proposed conflict of interest rule would restrict the use of AI in investor interactions where it places the firm’s interests ahead of the investor’s, but it does not ban AI outright.

Q5: What is the single biggest risk of AI trading, according to the SEC?

A: While the SEC highlights multiple risks, Chair Gensler has most frequently and forcefully pointed to systemic risk as the paramount concern. He worries that the widespread reliance on a few similar AI models could lead to “digital herding,” where many actors simultaneously make the same decision, potentially triggering a rapid, deep, and uncontrollable market crisis.

Q6: Is the SEC equipped with the technical expertise to regulate something as complex as AI?

A: This is a key challenge. The SEC has acknowledged this and is actively working to bolster its technical talent. It has been hiring more data scientists, quantitative analysts, and technology specialists. However, competing with the private sector for top AI talent is difficult. The agency’s ability to continue building this internal expertise will be critical to its long-term effectiveness.

Q7: Where can I find the SEC’s official statements and proposed rules on AI?

A: The best source is the SEC’s official website, sec.gov. You can search for:

- Speeches and Public Statements: Chair Gensler’s speeches often detail his views on AI.

- Proposed Rules: Search for “Conflicts of Interest Associated with the Use of Predictive Data Analytics by Broker-Dealers and Investment Advisers,” the July 2023 proposal discussed in this article as the conflict of interest rule.

- Press Releases: These announce enforcement actions related to AI and other technologies.